Imagine an underwriter named Alex, assessing a complex loan for a roastery looking to open a second location. The system has flagged the loan proposal as “referred”, meaning that it’s sitting in a grey area where automated rules can’t provide a clear answer. Alex’s task is to assess the affordability of the proposal for this potential borrower and the likelihood that they will repay the loan, while identifying any potential fraud. This could involve scanning months of unstructured data and switching between systems to identify seasonal revenue patterns and hidden debt, and reconciling irregular cash flows from different sources while ensuring the decision is unbiased. All this while managing comms with the introducer and the clock ticking on a tight SLA.

Financial services show how much is at stake in AI implementation. In underwriting, insurance, and banking in particular, executives expect AI adoption to jump from 14% to 70% in the next three years, with productivity gains of up to 30%, according to recent research. But as research also shows, the success of these initiatives depends on a frequently overlooked factor: including the people who will use them. The question for lenders is no longer whether they should use AI, but how to ensure their experts actually trust it.

In recent projects at Clearsky, I’ve worked on the front lines of financial software design, collaborating closely with underwriters and stakeholders to define journeys and interfaces that maximise efficiency and outcomes in financial decisioning. Recognising that the coming AI transformation is a design challenge as well as a technical one, we are now working on AI-powered prototypes that combine this hands-on experience with research into AI design patterns. I’m sharing these learnings to show how a design-led approach can help us build better AI software.

The Human-Centric Approach

Of course, data readiness is a fundamental starting point for the feasibility of any AI transformation, and many risk factors in AI implementation need to be addressed on the engineering front, including data privacy, security, model accuracy, and inclusiveness. We also cannot overlook the societal costs of mis-scoring vulnerable applicants, which can lead to unfairness and significant social impacts. Designing AI is a cross-disciplinary collaborative effort.

On the human-centred front, we need to think about how the user will change their relationship with the system when it becomes a "co-pilot," and how tasks that are not automated will require more cognitively complex, judgment-based work. Highlighting this shift in cognitive load makes the case for augmentation more tangible.

For credit underwriters, the primary friction points are well-known:

- Information overload: Sifting through mountains of data.

- Time-consuming fraud detection: The manual "detective work" required to spot misrepresentations.

- Administrative burden: The constant context-switching between tools and brokers.

So, what’s the specific value AI can bring to the end user? Could we solve these problems with just more automation?

Traditional tech is highly effective for processing structured data, performing consistent calculations, and enforcing simple, fixed rules (e.g., automatic rejection based on a basic credit score). When I led the design of decision engines, our design focus was to organise the key information in a layout that gives the underwriter as much of the information needed for a decision as possible at a glance, and to set rules for prioritising issues.

AI, however, has the potential to analyse vast amounts of data far more quickly than a human could, and to make recommendations based on a variety of financial data points. We can also use AI capabilities to summarise information and present it in a user-friendly way, analyse historical decision-making patterns, provide feedback to underwriters on potential biases in their decisions, and support training and onboarding.

But implementing AI in interfaces comes with risks.

As an example, consider two scenarios. In the first scenario, we see a lack of trust. An underwriter receives an AI recommendation to decline a loan application because of flagged inconsistencies. Without a clear explanation for the AI flag, the underwriter dismisses the recommendation as unreliable, potentially allowing a risky loan to pass through. In the second scenario, we see over-reliance. An underwriter approves a high-value loan based solely on the AI's recommendations, without double-checking, risking financial and reputational losses for the business.

Design Patterns for Decision Support

To move from theory to practice, I’m breaking down specific design patterns to ensure the AI supports the human expert.

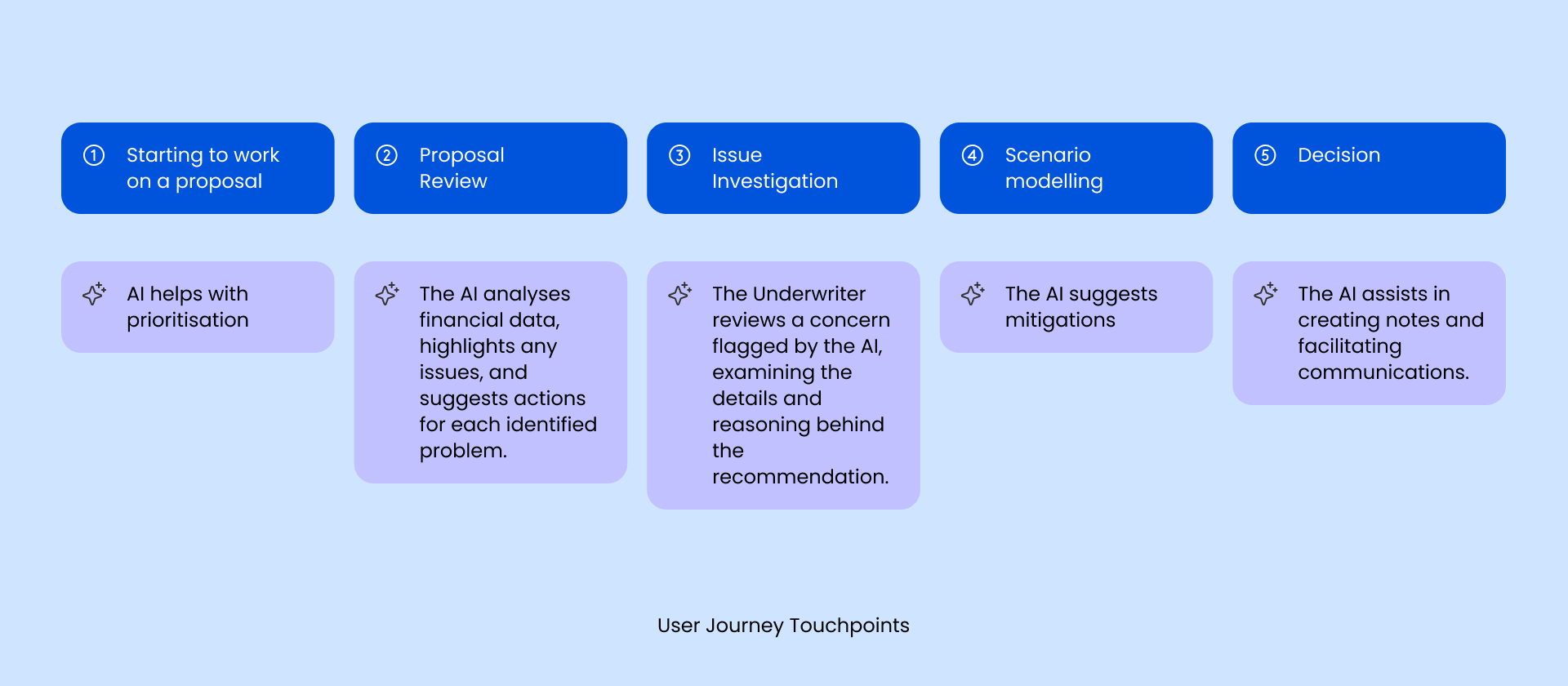

1. Mapping the Experience: Automation vs. Augmentation

In design, journey mapping and task analysis are two of the first tools we use to understand the user’s workflows in depth. Here, we identify the user's goals, needs, and pain points at every step.

Analysing at this point what tasks can be automated or augmented will help us calibrate decision support, explanations, and user control when we design the interface.

- Potential for automation: High-volume, low-risk tasks like document retrieval, data entry, and pre-sorting broker communications.

- Best for augmentation: High-stakes tasks like evaluating repayment propensity, complex risk evaluation, and accepting/rejecting decisions.

The Goal: Reduce the administrative burden so the underwriter can spend more of their time on high-value decisions.
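The automation/augmentation split above can be sketched as a simple triage rule. This is a minimal illustration, not a real scoring method: the `Task` fields, the `Mode` names, and the `triage` function are all hypothetical, and in practice the volume and stakes of each task would come out of the journey-mapping exercise rather than from two booleans.

```python
from dataclasses import dataclass
from enum import Enum


class Mode(Enum):
    AUTOMATE = "automate"  # the system completes the task on its own
    AUGMENT = "augment"    # the system assists, the underwriter decides


@dataclass(frozen=True)
class Task:
    name: str
    high_volume: bool  # assumption: judged during task analysis
    high_stakes: bool  # assumption: judged during task analysis


def triage(task: Task) -> Mode:
    """Only high-volume, low-stakes tasks are candidates for automation.

    Everything else defaults to augmentation, the conservative choice.
    """
    if task.high_volume and not task.high_stakes:
        return Mode.AUTOMATE
    return Mode.AUGMENT


tasks = [
    Task("document retrieval", high_volume=True, high_stakes=False),
    Task("data entry", high_volume=True, high_stakes=False),
    Task("complex risk evaluation", high_volume=False, high_stakes=True),
    Task("accept/reject decision", high_volume=True, high_stakes=True),
]
plan = {task.name: triage(task) for task in tasks}
```

Defaulting to augmentation means a task is only automated when the team has explicitly judged it to be both high-volume and low-stakes.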

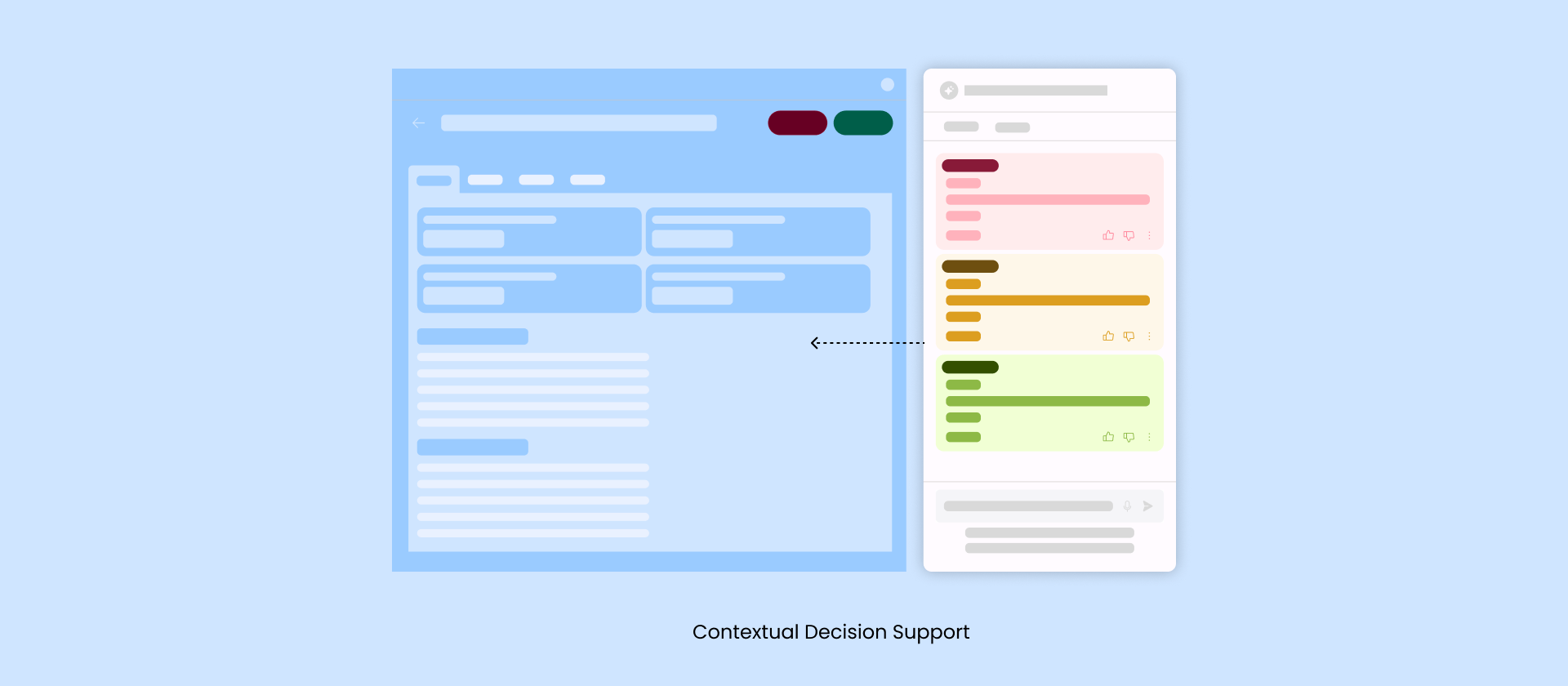

2. Human-in-the-Loop: Contextual Decision Support

The human-in-the-loop approach refers to the calibration between machine decision-making and human intervention. In the context of underwriting, this step is fundamental to ensuring that humans remain the decision-makers and that AI does not take control of high-stakes financial decisions. By reinforcing the principle that the underwriter makes the final call, we safeguard human agency and prevent perceived automation creep.

Conceptually, that means the AI is an “assistant” or “co-pilot”, not an “expert”, a “decision maker”, or a “real person”. In the design of the interface, we can reinforce this by separating recommendations from decision-making actions and by implementing:

- Contextual layouts: AI recommendations are presented contextually within the user’s workflow.

- Categorised alerts: Using tiered alerts to highlight urgency, allowing for immediate prioritisation.
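One way to picture these two ideas in a data model is sketched below, under stated assumptions: the three tier names, the `Recommendation` and `Decision` types, and the `record_decision` helper are hypothetical, chosen only to show tiered alerts sorted by urgency and a recommendation that stays separate from the decision itself.

```python
from dataclasses import dataclass
from enum import IntEnum
from typing import List, Tuple


class Tier(IntEnum):
    # Assumed three-level scheme; a real product may use different tiers.
    INFO = 1
    WARNING = 2
    CRITICAL = 3


@dataclass(frozen=True)
class Alert:
    tier: Tier
    message: str


@dataclass(frozen=True)
class Recommendation:
    """Advisory only: carries a suggestion, never a final outcome."""
    suggested_outcome: str
    alerts: Tuple[Alert, ...]


@dataclass(frozen=True)
class Decision:
    """A final outcome, always attributed to a named underwriter."""
    outcome: str
    decided_by: str


def prioritise(alerts: List[Alert]) -> List[Alert]:
    """Most urgent first; alerts in the same tier keep their original order."""
    return sorted(alerts, key=lambda alert: alert.tier, reverse=True)


def record_decision(outcome: str, underwriter_id: str) -> Decision:
    """Refuse to record a decision that no human has taken ownership of."""
    if not underwriter_id.strip():
        raise ValueError("a decision must be made by an underwriter")
    return Decision(outcome=outcome, decided_by=underwriter_id)


alerts = [
    Alert(Tier.INFO, "New bank statement uploaded"),
    Alert(Tier.CRITICAL, "Director name mismatch on application"),
    Alert(Tier.WARNING, "Seasonal revenue dip detected"),
]
ordered = [alert.message for alert in prioritise(alerts)]
```

Because `Recommendation` and `Decision` are distinct types, nothing the AI produces can be stored as a final outcome without passing through `record_decision` and an underwriter's identifier.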

3. Explanation Strategy: Building Traceable Trust

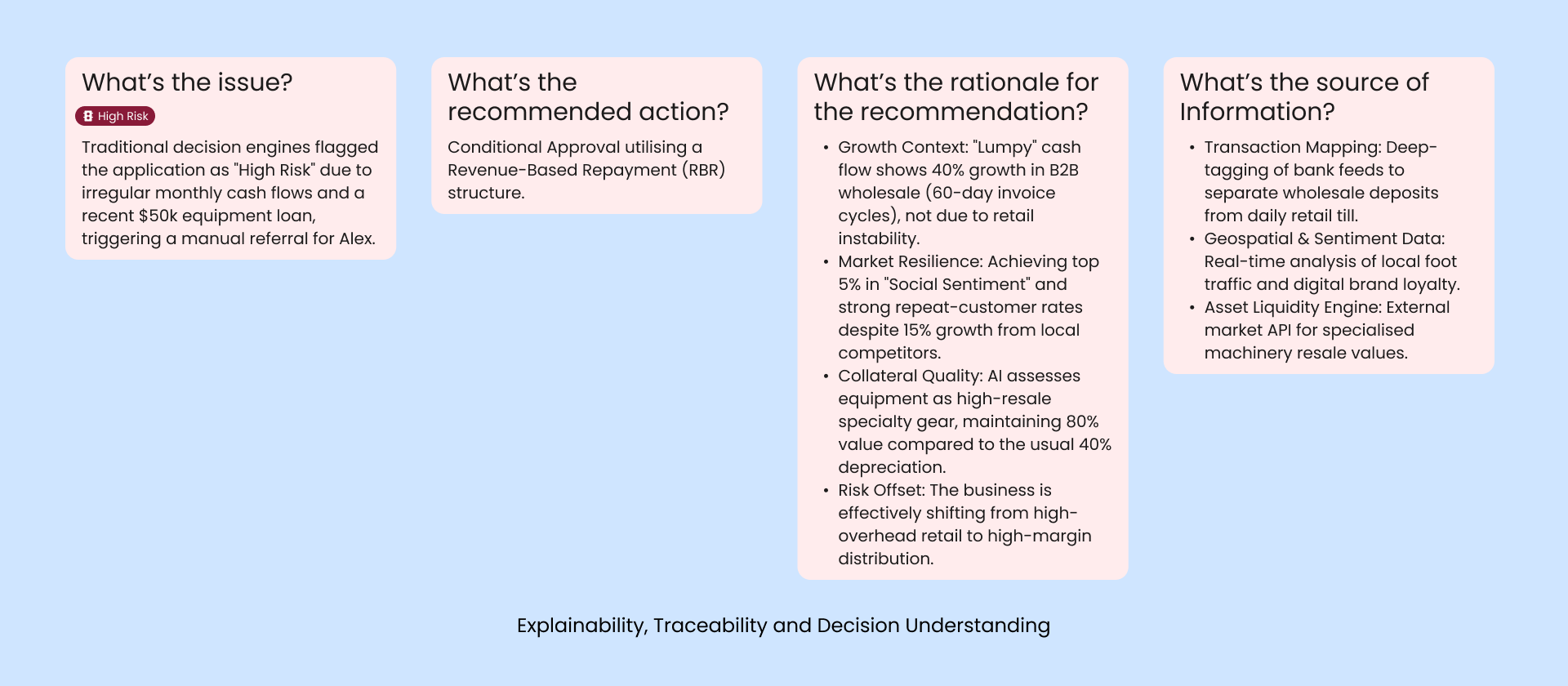

If underwriters don’t understand why the AI flagged a risk, they could ignore it (or worse, follow it blindly). But since credit assessment in some cases is done in minutes, we can’t overwhelm the user with information to process; we must calibrate which explanations they need to see at each point, depending on the risk level associated with that decision.

- Explainability: Beyond simple "risk scores", we should show the reasoning behind the AI’s recommendations.

- Traceability: Every AI claim includes a direct link to the source document, allowing for instant verification.

- Decision Understanding: The system provides visibility into the process for reaching recommendations, which should align with the business's and experts' best practices.
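As a rough sketch of how traceability and calibrated explanations might fit together, the example below models an AI claim that cannot exist without a source reference, and a renderer that only expands the reasoning for high-risk decisions. The `Claim` fields, the `render` function, and the sample values are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Claim:
    statement: str  # what the AI asserts
    reasoning: str  # why it asserts it
    source: str     # link or reference to the supporting document

    def __post_init__(self) -> None:
        # Traceability: a claim without a source is rejected outright.
        if not self.source.strip():
            raise ValueError("every AI claim needs a source reference")


def render(claim: Claim, high_risk: bool) -> str:
    """Always show the claim and its source; add reasoning when risk is high."""
    line = f"{claim.statement} [source: {claim.source}]"
    if high_risk:
        line += f"\n  Why: {claim.reasoning}"
    return line


# Illustrative values only, not real applicant data.
claim = Claim(
    statement="Revenue dips each January",
    reasoning="Deposits fell in three consecutive Januaries",
    source="bank-statements.pdf#p4",
)
brief = render(claim, high_risk=False)
detailed = render(claim, high_risk=True)
```

The source link is shown at every risk level so verification is always one click away, while the longer reasoning is reserved for the decisions where the underwriter has most to lose by skipping it.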

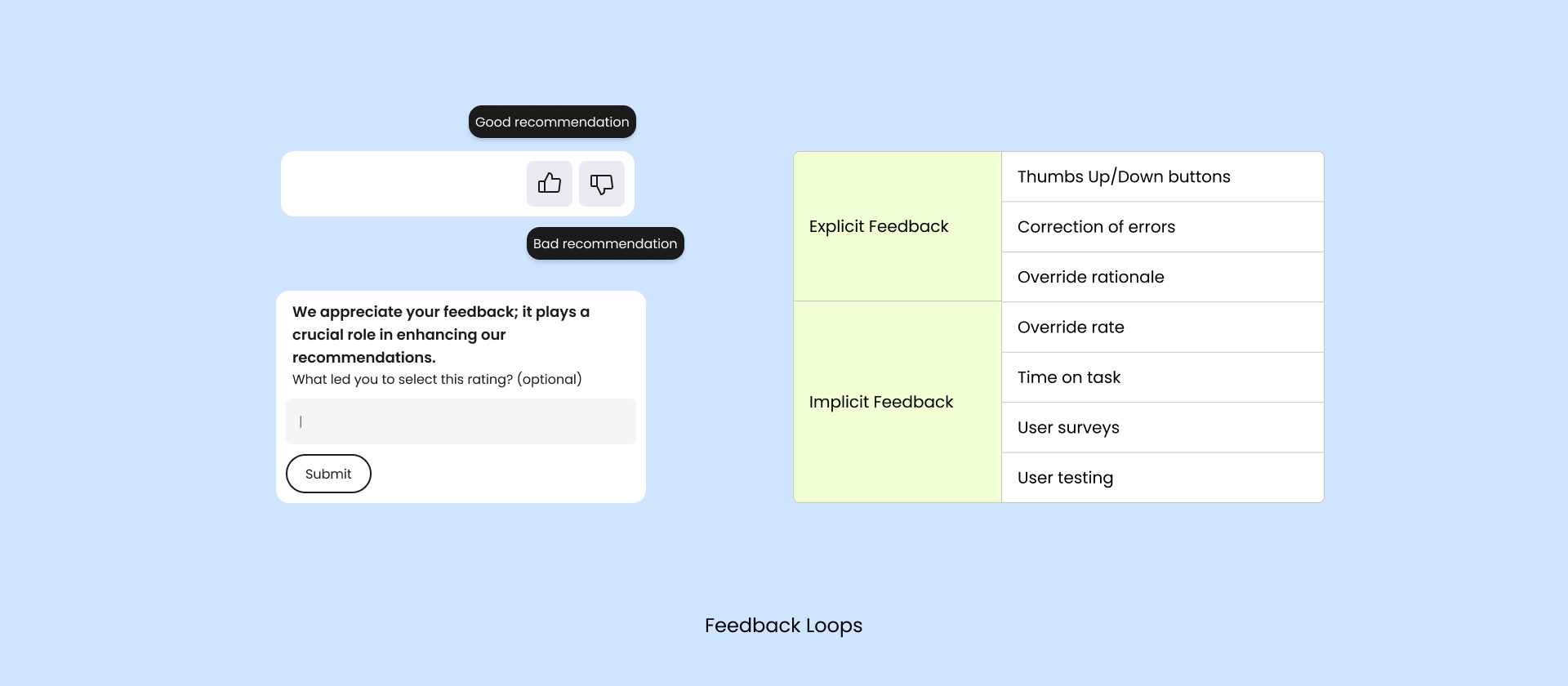

4. Feedback Loops: Co-Creating with AI

In financial decisioning, we should encourage the user, as an expert underwriter, to correct mistakes and give feedback on incorrect suggestions, so the machine can learn and developers can get feedback for system improvement. Highlighting the direct benefits of this process can be motivating.

- Corrective Loops: When an underwriter disagrees with an AI suggestion, the system shouldn’t just "go away"; it should ask for the reason and explain to the user the value of providing feedback. This data is fed back into the model to mitigate bias and improve future accuracy.
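A corrective loop like this could be captured with a small record type. The sketch below is hypothetical throughout (`Override`, `capture_override`, and the in-memory `feedback_log` are illustrative names): it records an entry only when the underwriter's decision differs from the AI suggestion, and it insists on a reason before accepting the override.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import List, Optional


@dataclass(frozen=True)
class Override:
    application_id: str
    ai_suggestion: str
    underwriter_decision: str
    reason: str
    recorded_at: datetime = field(
        default_factory=lambda: datetime.now(timezone.utc)
    )


def capture_override(
    application_id: str,
    ai_suggestion: str,
    underwriter_decision: str,
    reason: str,
    log: List[Override],
) -> Optional[Override]:
    """Log a disagreement with its reason; return None when both agree."""
    if ai_suggestion == underwriter_decision:
        return None  # agreement: nothing to correct
    if not reason.strip():
        # The UI would pair this prompt with a note on why feedback matters.
        raise ValueError("please say why you disagreed with the suggestion")
    entry = Override(application_id, ai_suggestion, underwriter_decision, reason)
    log.append(entry)
    return entry


feedback_log: List[Override] = []
capture_override("app-001", "decline", "decline", "", feedback_log)
capture_override(
    "app-002", "decline", "approve",
    "Flagged inconsistency is explained by a change of trading name",
    feedback_log,
)
```

How the logged overrides then reach the model or the development team is outside this sketch; the point is that the reason is captured at the moment of disagreement, while the context is still fresh.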

Conclusion

AI is disrupting the way we work, with a huge impact on the financial industry. But speed to market shouldn't come at the cost of responsible human-centred design.

By prioritising a human-centred approach built on transparency, control, and feedback, we don't just create more efficient software; we create a more accessible and transparent financial ecosystem.

To start applying these principles, design teams can take concrete steps:

1. Conduct workshops to integrate journey mapping and user feedback into the design process. This will allow your team to better align AI tools with user needs and workflows.

2. Develop a prototype incorporating AI recommendations in a user-friendly interface, with a focus on enhancing explainability and user control. By iteratively testing and refining, teams can better bridge the gap between technology and user trust.